TESTING IN A CROSS-CULTURAL TEAM

Every man is in certain respects a) like all other men, b) like some other men, c) like no other man.

-Kluckhohn & Murray

Whether you are working in IT or any other industry, communicating with people from different cultures is extremely difficult. To be honest, it is much more difficult than solving a complex test automation problem! It often requires a broad perspective and real understanding. When a conflict arises in a cross-cultural team, day-to-day operations become even more complex. Without a deep analysis of how cultures interact and where that interaction creates problems, theoretical management approaches often make things worse.

I am not sure how I came to link cross-cultural phenomena to software testing, but I think they are related. At least human beings are the main actors in both, right?

To make this connection stronger, I will share a cross-cultural incident from my past in which I played the lead role, and I will try to convince you that this subject is worth deeper investigation.

The incident was regarding the performance appraisal of a test consultant in one of my previous companies. Just after her second month in the division, we had gone through the mandatory process of performance evaluation. The problem occurred in our face-to-face meeting, as she told me that she was not satisfied with the grade I had given to her.

She continued by explaining how she was not aware of the expectations, how she was not involved in creating her target/score card, and how the grading criteria were not individual but corporate-wide. She added that she was also willing to evaluate me as her supervisor, and she wanted me to explain to her what kind of performance management courses I had completed so far!

After I got this shocking response, I tried to explain the details of the current performance measurement system to her and, in a way, tried to convince her of its effectiveness and fairness. I also told her that we had no better option and that other firms were even worse. [I used a blend of two different rationalization techniques: “denial of responsibility” and “social weighting.” No chance for you to find these in a software testing book!]

After we finished and she regained her composure, I felt deeply guilty about what I had said. I had defended our way of measuring performance without properly analyzing its deficiencies. As an ethical and detail-oriented person (which I believe myself to be), I decided to make a thorough investigation of our performance measurement system, aiming to uncover deficiencies from both logical and ethical perspectives.

As I had never been faced with any complaints about the performance system from my local staff before, I had thought that everything was fine and we were on the right track. With some rational thinking, I found several striking root causes and major deficiencies.

Let me outline the underlying causes that led to this complex situation:

• The consultant came from a country where, as I have gleaned from articles/studies, people are extremely attentive to punctuality. They tend to get annoyed when timing is not accurate and when they are not informed in advance of an event. Consequently, a sudden/quick performance evaluation can be disruptive, as the timing/organization/agenda is expected to be very clear and smooth.

• She came from a large power distance culture.

• For people of this culture, performance measures are a critical means of expressing themselves, and they tend to look for explicitly defined criteria in terms of owner, unit of measure, collection frequency, data attributes, expected values (targets), and thresholds.

• As people of this culture are usually very diplomatic and fair, they easily become dissatisfied when their feedback is not collected and their opinions are not valued on any issue (in our case, feedback/contributions about the performance measurement criteria).

• The current performance management system was very dependent on project scores and corporate goals. Individual items were generally missing on the target card. As these people are individualists rather than collectivists, they would be more satisfied with individual performance evaluation items rather than corporate/generic ones.

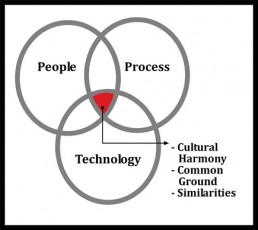

Unfortunately, these points are not written in any software testing book, and if you do not pay attention to social matters as well as technical ones, you suffer a lot. My cross-cultural experiences taught me that! If you want to achieve a high organizational capability, you need to understand the importance of:

• Being aware of cross-cultural phenomena (differences or misunderstandings)

• Taking responsibility for bridging the cultural gaps

• Training your staff on cultural differences

• Establishing both social and work-related activities for team members

• Developing more effective leadership by understanding the cultural dimensions of individualism vs. collectivism, masculinity vs. femininity, future orientation, power distance, and uncertainty avoidance.

Hopefully this section will prompt you to focus on the root causes and consequences of some organizational incidents by helping you to develop a cross-cultural point of view.

Some problems require a special mindset and experience. Technology cannot solve all your problems!